Difference between revisions of "Eye Tracking for ALS Patients"

Result images added in this revision: RESULT4.jpg, RESULT1.jpg, RESULT3.jpg, RESULT2.jpg.

Revision as of 18:23, 19 December 2011


Eye Tracking-Project: Image Selection and Compression

This project tackles the image processing component of the CubeSat project. In particular, it deals with the selection of valuable image data and the effective compression of that data.

The Project

Project Timeline: Download

Project Poster: File:UGR cubsat2 poster SP11.ppt

Project abstract

The goal of this project is to examine the performance of different lossless compression algorithms and determine which one would be the most suitable for a CubeSat-based communication system. Because such a system combines high data collection potential with relatively low data transmission throughput, compression is a key factor in overall system performance. We select an optimal subset of the images generated by the IR camera mounted on a CubeSat and compress this subset. The compression algorithms we investigated and tested are run-length encoding, difference encoding, and Huffman coding. Initially, we implement these algorithms in MATLAB. Later, we will use the datasets developed in the first part of the CubeSat project.
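To make two of the algorithms named above concrete, here is a minimal Python sketch of run-length encoding and difference encoding on a single row of pixel values. It is not the project's MATLAB code; the function names and the list-of-integers pixel representation are assumptions made for illustration.

```python
from typing import List, Tuple


def rle_encode(pixels: List[int]) -> List[Tuple[int, int]]:
    """Run-length encode a pixel sequence as (value, run_length) pairs."""
    runs: List[Tuple[int, int]] = []
    for p in pixels:
        if runs and runs[-1][0] == p:
            # Same value as the current run: extend it by one.
            runs[-1] = (p, runs[-1][1] + 1)
        else:
            # Value changed (or first pixel): start a new run.
            runs.append((p, 1))
    return runs


def rle_decode(runs: List[Tuple[int, int]]) -> List[int]:
    """Expand (value, run_length) pairs back into the pixel sequence."""
    out: List[int] = []
    for value, run_length in runs:
        out.extend([value] * run_length)
    return out


def diff_encode(pixels: List[int]) -> List[int]:
    """Keep the first pixel, then store each pixel as the change from its predecessor."""
    if not pixels:
        return []
    return [pixels[0]] + [b - a for a, b in zip(pixels, pixels[1:])]


def diff_decode(deltas: List[int]) -> List[int]:
    """Rebuild the pixels by accumulating the stored differences."""
    out: List[int] = []
    total = 0
    for d in deltas:
        total += d
        out.append(total)
    return out


row = [10, 10, 10, 12, 12, 13]   # hypothetical pixel row
print(rle_encode(row))           # runs of equal values
print(diff_encode(row))          # small deltas where neighbours are similar
```

Both transforms are lossless: decoding the encoded row returns the original values. Difference encoding does not shrink the data by itself; it tends to produce many small values, which a later entropy coder such as Huffman coding can represent with short codes.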

Background

This research was conducted by Sana Naghipour and Saba Naghipour in the Fall 2011 semester at Washington University in Saint Louis. It was part of the Undergraduate Research Program and taken for credit as the course ESE 497 in the Electrical and Systems Engineering Department. The project was overseen by Dr. Arye Nehorai, Ed Richter, and Phani Chavali.

Project overview

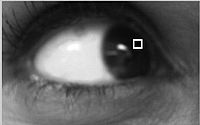

The Eye tracker project is a research effort to empower people suffering from Amyotrophic Lateral Sclerosis (ALS) to write using their eyes by tracking the movement of the pupil. The project will be implemented in two main phases:

First Phase: development of the software for pupil tracking.

Second Phase: building the hardware necessary to capture the images of the eye and transfer the images to a processing unit.

Source code (zipped): File:Cubesat compression source SP11.zip

Note: the code is reasonably well commented, but it may still be a bit confusing. For simplicity, run the script called "Compression test". It should prompt the user for an image to process and then, given a valid filename, perform an array of compression tests. After finishing, it should display the results of the operations graphically.

Problems encountered

The main problems I ran into had to do with the fact that I was using MATLAB. It seems that MATLAB needs to store data as a contiguous array and that no other abstract data types are supported. Because of the high memory overhead of the algorithms, particularly Huffman coding, I would often run out of memory when working with a large pixel bit size or a large image. I never found a good solution to this problem, but I expect it to be alleviated by using a different language. Another problem I encountered was MATLAB's "native" tree structure support. I found it hard to work with and somewhat lacking in functionality (in particular, it was hard to build a tree from the bottom up, which is required for Huffman coding). My solution was to write a small tree class function library.
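The bottom-up tree construction mentioned above can be sketched in Python with a priority queue. This is an illustrative sketch, not the tree library written for the project: the function name `huffman_codes` and the tuple-based node layout are assumptions, and only standard-library calls (`heapq`, `collections.Counter`, `itertools.count`) are used.

```python
import heapq
from collections import Counter
from itertools import count
from typing import Dict, Hashable, Iterable


def huffman_codes(symbols: Iterable[Hashable]) -> Dict[Hashable, str]:
    """Build a Huffman code table by merging the two rarest nodes until one tree remains."""
    freq = Counter(symbols)
    if not freq:
        return {}
    if len(freq) == 1:
        # A single distinct symbol still needs a one-bit code.
        return {next(iter(freq)): "0"}

    tie = count()  # unique tie-breaker so the heap never has to compare nodes
    # Leaves are 1-tuples (symbol,); internal nodes are 2-tuples (left, right).
    heap = [(f, next(tie), (sym,)) for sym, f in freq.items()]
    heapq.heapify(heap)

    # Bottom-up construction: repeatedly join the two least frequent subtrees.
    while len(heap) > 1:
        f1, _, left = heapq.heappop(heap)
        f2, _, right = heapq.heappop(heap)
        heapq.heappush(heap, (f1 + f2, next(tie), (left, right)))

    # Walk the finished tree to read off the bit string for each leaf.
    codes: Dict[Hashable, str] = {}
    stack = [(heap[0][2], "")]
    while stack:
        node, prefix = stack.pop()
        if len(node) == 1:
            codes[node[0]] = prefix
        else:
            stack.append((node[0], prefix + "0"))
            stack.append((node[1], prefix + "1"))
    return codes


pixels = [0] * 5 + [1] * 2 + [2] + [3]   # hypothetical pixel values
table = huffman_codes(pixels)
encoded_bits = sum(len(table[p]) for p in pixels)
print(table, encoded_bits)
```

The heap holds only one entry per distinct symbol, so the memory used by the tree grows with the number of distinct element values rather than with the image size. That number still grows quickly as the encoding element size increases, which is consistent with the memory pressure described above.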

Experimental setup

To test how effective each algorithm was at compressing a variety of images, I selected a few random pictures and ran the compression algorithms on each one with varying encoding element sizes. I then looked at which algorithm had the best compression performance, that is, the smallest compression ratio (compressed size / original size). I also ran the algorithms on images generated by code from Michael Scholl. Those images were supposed to be somewhat representative of the kind of images we will be getting.
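As a rough illustration of this comparison, the Python sketch below computes the compression ratio (compressed size / original size) for several encoding element sizes. The toy run-length format (one count byte plus one element per run), the function names, and the sample data are all assumptions made for this example and are not taken from the project's test script.

```python
from typing import Dict, Iterable


def rle_compressed_size(data: bytes, element_size: int) -> int:
    """Bytes used by a toy RLE format: one count byte (1..255) plus one element per run."""
    if element_size <= 0 or len(data) % element_size != 0:
        raise ValueError("data length must be a positive multiple of element_size")
    runs = 0
    previous = None
    run_length = 0
    for i in range(0, len(data), element_size):
        element = data[i:i + element_size]
        if element == previous and run_length < 255:
            run_length += 1
        else:
            # New value, or the count byte is full: start another run.
            runs += 1
            previous = element
            run_length = 1
    return runs * (1 + element_size)


def compression_ratio(original_size: int, compressed_size: int) -> float:
    """Compressed size divided by original size; smaller is better."""
    if original_size <= 0:
        raise ValueError("original_size must be positive")
    return compressed_size / original_size


def ratios_by_element_size(data: bytes, element_sizes: Iterable[int]) -> Dict[int, float]:
    """Compression ratio of the toy RLE format for each element size."""
    return {
        size: compression_ratio(len(data), rle_compressed_size(data, size))
        for size in element_sizes
    }


sample = bytes([0] * 8 + [1] * 8)          # hypothetical 16-byte image row
ratios = ratios_by_element_size(sample, [1, 2, 4])
best = min(ratios, key=ratios.get)         # element size with the smallest ratio
print(ratios, best)
```

A ratio above 1 means the encoding expanded the data, which can happen with run-length encoding on images that have few repeated values.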

Results

Future work

- Analyze the expected time complexity and space complexity of the algorithms.

- Implement the algorithms in a more general-purpose language like C.

- Get hands on some hardware and attempt to run the code. Examine factors like power consumption, processing speed, etc.